It says no to autonomous weapons and mass surveillance, but it is used for raids in Iran. Who really controls artificial intelligence? The paradox of ethics in the age of algorithms.

Last week, on 24 February 2026, US Secretary of Defence Pete Hegseth asked Anthropic CEO Dario Amodei to make the company’s AI model available, without any restrictions, for legitimate military use by Friday, invoking the Defence Production Act, which dates back to the Cold War era.

The company refused and last Thursday, two days after the ultimatum, published its reasons on its website: “I deeply believe in the existential importance of using artificial intelligence to defend the United States and other democracies and to defeat our autocratic adversaries.” The statement opens with the obvious: the start-up has always worked towards this goal alongside the Pentagon, having signed a $200 million agreement in July 2025 to prototype cutting-edge AI capabilities in support of US national security.

In his rejection letter, Amodei explains his reasons: “In a small number of cases, we believe that AI could undermine, rather than defend, democratic values. Some uses are simply beyond what today’s technology can do safely and reliably. Two such use cases have never been included in our contracts with the Department of Defence, and we believe they should not be included now.” The two cases are domestic mass surveillance and fully autonomous weapons.

Now here’s the thing. A US Department of Defence memorandum dated 9 January stated that the Pentagon would only enter into contracts with artificial intelligence companies that agreed to ‘any lawful use’, removing the safeguards in the two cases mentioned above. Yet although the memorandum was published in early January, Anthropic’s note only appeared on Thursday 26 February. That was four days after the Wall Street Journal published an article, on Sunday 22 February, revealing that Anthropic’s AI had been used in the mission in Venezuela to capture Maduro, and two days after the meeting between Hegseth and Amodei on Tuesday 24 February, at which the ultimatum was issued.

Last Thursday’s note sets out the threats allegedly made at that meeting: Hegseth is said to have threatened to remove Anthropic’s AI from government systems if the company kept those safeguards in place and, as a second threat, to designate Anthropic’s AI a ‘risk to the supply chain’. Yet the supply-chain designation and the invocation of the Defence Production Act appear inherently contradictory: the first labels the company’s AI, Claude, a security risk; the second treats Claude as essential to national security.

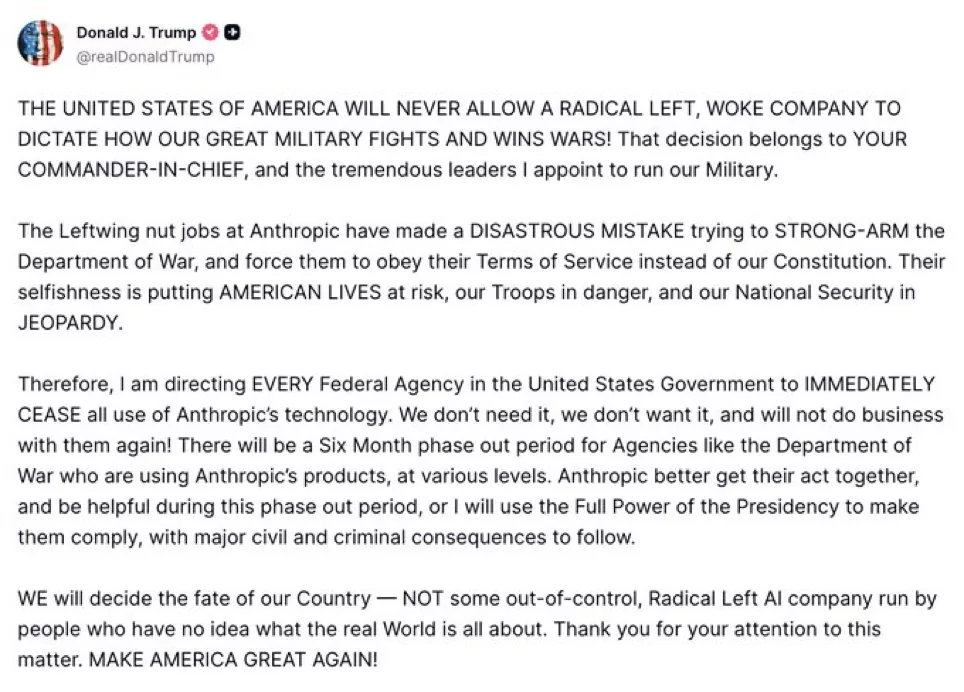

Last Friday, the day the ultimatum expired, Amodei framed the issue in ethical terms in an interview with CBS News: “It’s about the principle of defending what is right.” President Donald Trump responded in a post on Truth Social, ordering federal agencies to stop using the company’s products and granting them six months to complete the transition.

All this opened the door to competitors such as OpenAI, which announced on the same day that it had reached an agreement with the Pentagon for the use of its AI in classified contexts, in line with the Pentagon’s security requirements. OpenAI also recently hired Peter Steinberger, the creator of OpenClaw, to work on the next generation of AI agents, narrowing the gap with Anthropic: it should be noted that OpenClaw has, until now, always used Claude models.

Then, on Saturday 28 February 2026, the joint US-Israeli attack on Iran, which apparently took every other country by surprise, caused 201 deaths and 747 injuries, damaged military and nuclear infrastructure, and killed Supreme Leader Ali Khamenei. Estimates of the death toll vary, however: the Iranian government speaks of over 3,000 deaths, while US and HRANA sources indicate higher figures, of up to 7,000 or even 32,000. Iran responded with missiles and drones, causing one death and 22 injuries in Tel Aviv, destroying US bases in drone attacks over Oman and elsewhere, and then ‘closing’ the Strait of Hormuz, with inevitable economic repercussions for oil transport and marine insurance.

Yet according to the Wall Street Journal, and despite Anthropic’s denial, the US government also used the company’s AI for intelligence analysis and operational war simulations in connection with the attack on Iran.

Meanwhile, in recent days Anthropic’s Claude chatbot has reached the top spot in downloads on Apple’s App Store, surpassing OpenAI’s ChatGPT for the first time. On Monday, some of the company’s artificial intelligence apps briefly crashed because of what the company called ‘unprecedented demand’. Fans are literally taking to the streets to express their appreciation.

There are many points to consider behind this whole issue, but one is particularly relevant: when you hide behind ethical principles, it is difficult to discern and maintain those principles over the long term. True, Amodei left OpenAI with his sister Daniela (both pictured in the media kit available on the Anthropic website) over ethical differences about AI safety and the commercial race, and founded Anthropic as a ‘responsible’ alternative to OpenAI itself. True, Anthropic may now have lost the entire US government as a customer, marking a new chapter in the relationship between Washington and Silicon Valley. But its artificial intelligence, its product, has still been used by the government despite the CEO’s dissent. This underlines how every single choice has weight and repercussions, and how even an idea, however legitimate on ethical, political or economic grounds, can, like every single dollar, be traded at the end of the day.

ALL RIGHTS RESERVED ©