
Physical AI represents the most significant evolution in contemporary artificial intelligence, transforming systems from mere data analysers into agents capable of perceiving, reasoning and acting within the physical world. Unlike traditional automation – which is rigid, pre-programmed and isolated – physical AI integrates advanced sensory perception, autonomous reasoning models and real-time control to create adaptive, intelligent and collaborative systems.

In the manufacturing sector, this transition represents an extraordinary opportunity for European and Italian companies: not only to automate repetitive tasks, but also to enable new forms of human-machine collaboration, significantly reduce production reconfiguration times, and address the flexibility challenges that ‘high-mix, medium-volume’ production has posed for decades.

According to the World Economic Forum, AI-powered systems achieve up to 40% greater operational efficiency than traditional automation[1]. The global industrial robotics market is set to see 609,000 new installations by 2026[2], with collaborative robotics representing the fastest-growing segment. The impact extends beyond productivity: from worker safety to reduced carbon emissions, and from product quality to operational throughput.

This in-depth analysis examines the technical architecture of physical AI, the fundamental differences between it and purely digital AI, the enabling components – from mechatronics to edge control – and the emerging industrial use cases that are redefining competitiveness, safety and sustainability in manufacturing.

What is physical AI?

Definition

Physical AI is an artificial intelligence system that perceives, reasons, plans and acts directly within the physical world, using sensors, real-time distributed processing and intelligent actuators. Unlike traditional AI models, which operate exclusively on data and algorithms in digital environments, physical AI is embodied: its intelligence resides in a physical body – a robot, machine, vehicle or infrastructure – that continuously interacts with its surroundings [3].

Jensen Huang, CEO of NVIDIA, summarised the evolution of AI in four stages during CES 2025[4]:

- Perception AI: recognises images, speech and patterns in static data;

- Generative AI: creates text, images and code from prompts;

- Agentic AI: reasons, plans and acts autonomously in digital environments;

- Physical AI: perceives, reasons, plans and acts in the physical world.

In practical terms, a physical AI system consists of three integrated architectural layers[4]:

| Layer | Function | Technologies |
|---|---|---|
| Perception | Interpreting the environment using sensors and data fusion | Computer vision, LiDAR, tactile sensors, audio |
| Reasoning | Planning the optimal course of action in real time using AI models | Neural networks, foundation models, reinforcement learning |
| Action | Executing the planned action using actuators and locomotion systems | Motors, robotic arms, positioning systems, AMRs (Autonomous Mobile Robots) |
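As a rough illustration of how the three layers pass data to one another, the following Python sketch runs a perceive-reason-act loop for a robot moving along a single axis. Everything in it is hypothetical. The `Observation` fields are assumptions chosen for readability. A proportional rule stands in for a learned reasoning model, and the speed limit and safety margin are illustrative values. None of it comes from a real robot stack.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Observation:
    """Perception output (hypothetical fields)."""
    obstacle_distance_m: float  # distance to the nearest obstacle
    target_position_m: float    # where the task wants the robot to be


@dataclass
class Command:
    """Action-layer input: a velocity set-point along one axis."""
    velocity_m_s: float


def perceive(raw_distance: float, raw_target: float) -> Observation:
    # Perception layer: a real system would fuse cameras, LiDAR, etc. here.
    return Observation(obstacle_distance_m=raw_distance, target_position_m=raw_target)


def reason(obs: Observation, position_m: float,
           max_speed: float = 0.5, safety_margin_m: float = 0.3) -> Command:
    # Reasoning layer: a proportional rule stands in for a learned policy.
    if obs.obstacle_distance_m < safety_margin_m:
        return Command(velocity_m_s=0.0)  # hold still while something is too close
    error = obs.target_position_m - position_m
    return Command(velocity_m_s=max(-max_speed, min(max_speed, error)))


def act(cmd: Command, position_m: float, dt_s: float) -> float:
    # Action layer: integrate the velocity command into a new position.
    return position_m + cmd.velocity_m_s * dt_s


def run_loop(readings: List[Tuple[float, float]], dt_s: float = 0.1) -> List[float]:
    """Run one perceive -> reason -> act cycle per (distance, target) reading."""
    position = 0.0
    trace = []
    for raw_distance, raw_target in readings:
        obs = perceive(raw_distance, raw_target)
        cmd = reason(obs, position)
        position = act(cmd, position, dt_s)
        trace.append(round(position, 3))
    return trace


# An obstacle appears on the fourth cycle: the robot holds position, then resumes.
print(run_loop([(2.0, 1.0)] * 3 + [(0.1, 1.0), (2.0, 1.0)]))  # prints [0.05, 0.1, 0.15, 0.15, 0.2]
```

In the sketch, the robot does not follow a fixed path. It re-evaluates the situation on every cycle, which is the difference from pre-programmed automation that this article draws.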

What physical AI is not

It is crucial to distinguish physical AI from related but conceptually different phenomena:

It is not traditional automation. Conventional industrial automation systems operate according to rigid, pre-programmed logic: a 6-axis robot follows a fixed path to perform a specific task. If the task changes, the entire system must be reprogrammed, resulting in significant downtime costs. Physical AI, on the other hand, adapts, learns and reacts to unpredictable situations without the need for manual reprogramming[5].

It is not simply robotics. Traditional robotics is ‘blind’ to environmental changes. Physical AI integrates advanced perception, autonomous reasoning and real-time adaptive control, transforming the robot from an isolated machine into an intelligent agent capable of collaborating with humans and other systems[3].

It is not just AI in the cloud. Whilst generative AI relies on models trained in data centres and accessed via APIs, physical AI requires local, ultra-low-latency inference directly on the edge device. A critical safety decision (emergency braking, force adjustment) cannot tolerate network latency or dependence on the cloud[6].
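A common way to keep a safety decision independent of the network is to give inference a hard deadline and fall back to a fail-safe command if the deadline is missed. The Python sketch below illustrates the idea with the standard `concurrent.futures` module. Several values are hypothetical: the simulated 5 ms and 150 ms latencies, the 50 ms deadline, and the `STOP`/`SLOW_DOWN` commands. Python threads are also not a real-time platform, so a production system would enforce such deadlines in real-time firmware or dedicated safety hardware.

```python
import concurrent.futures
import time
from typing import Callable

# Shared worker pool used to run model inference off the control thread.
_EXECUTOR = concurrent.futures.ThreadPoolExecutor(max_workers=4)

SAFE_STOP = "STOP"  # hypothetical fail-safe command sent to the actuators


def decide_with_deadline(infer: Callable[[], str], deadline_ms: float) -> str:
    """Return the model's command if it arrives within the deadline,
    otherwise return the fail-safe command."""
    future = _EXECUTOR.submit(infer)
    try:
        return future.result(timeout=deadline_ms / 1000.0)
    except concurrent.futures.TimeoutError:
        # The late result is simply ignored (Python cannot kill the thread);
        # what matters is that the actuator receives a safe command on time.
        return SAFE_STOP


def edge_model() -> str:
    time.sleep(0.005)  # simulated ~5 ms on-device inference
    return "SLOW_DOWN"


def cloud_model() -> str:
    time.sleep(0.150)  # simulated ~150 ms network round trip plus inference
    return "SLOW_DOWN"


print(decide_with_deadline(edge_model, deadline_ms=50))   # expected: the model's command
print(decide_with_deadline(cloud_model, deadline_ms=50))  # expected: the fail-safe STOP
```

The design choice is that the safe outcome is the default. A late answer, whatever its source, never reaches the actuators.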

Key differences between digital AI and embodied AI

Digital AI runs in the cloud and in data centres. It processes structured data, static images or text, and produces informational outputs, for which latencies of a few seconds are generally acceptable. Embodied AI brings intelligence to robots and edge devices: it perceives the environment through sensors in real time and acts directly in the physical world, where latencies on the order of milliseconds are critical for safety. It is designed to adapt online during operation, but it introduces physical risks (collisions, contact forces, unexpected movements) that require real-time monitoring and control. Architecture, scalability and network dependency vary with the application and use case.

Architectural implications

Physical AI requires a distributed, multi-level architecture rather than one centred solely on the cloud:

- High-performance computing: GPU infrastructure or specialised servers are used to train foundation models and world simulators for robotics and industrial control;

- Simulation level: digital twin environments and physics-accurate simulations let systems run millions of iterations faster than real time, developing skills that can then be transferred to the real world;

- Edge computing: local devices on machines and robots run inference models with latencies in the tens of milliseconds, enabling real-time decision-making.
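Domain randomization is one widely used way to help skills learned in simulation transfer to real hardware. The same policy is evaluated, or trained, across many simulated variants of the physics. The toy Python sketch below applies this to a braking rule for a mobile robot. All of its parameters are illustrative assumptions, not values from any real simulator: the 1-D physics, the friction, reaction-time and speed ranges, and the 3 m/s² braking limit.

```python
import random

G = 9.81  # gravitational acceleration (m/s^2)


def stopping_distance(speed_m_s: float, friction: float, brake_decel: float,
                      reaction_s: float, dt: float = 0.001) -> float:
    """Integrate a 1-D braking manoeuvre and return the distance travelled (m).

    Simplified physics: the robot keeps its speed during the reaction delay,
    then decelerates at the braking limit, capped by floor friction (friction * G).
    """
    decel = min(brake_decel, friction * G)
    if decel <= 0.0:
        raise ValueError("deceleration must be positive or the robot never stops")
    distance, t = 0.0, 0.0
    while speed_m_s > 0.0:
        if t >= reaction_s:
            speed_m_s = max(0.0, speed_m_s - decel * dt)
        distance += speed_m_s * dt
        t += dt
    return distance


def randomized_success_rate(trigger_distance_m: float, episodes: int = 1000,
                            seed: int = 0) -> float:
    """Fraction of randomized episodes in which a robot that starts braking
    `trigger_distance_m` before an obstacle stops short of it."""
    rng = random.Random(seed)  # fixed seed gives a reproducible set of variants
    successes = 0
    for _ in range(episodes):
        friction = rng.uniform(0.2, 0.8)    # hypothetical floor-friction range
        reaction = rng.uniform(0.01, 0.05)  # hypothetical sensing + compute delay (s)
        speed = rng.uniform(0.5, 1.5)       # hypothetical cruising speed (m/s)
        d = stopping_distance(speed, friction, brake_decel=3.0, reaction_s=reaction)
        if d < trigger_distance_m:
            successes += 1
    return successes / episodes


for trigger in (0.2, 0.4, 0.8):
    print(trigger, randomized_success_rate(trigger, episodes=300))
```

A real pipeline would run far more varied scenarios in parallel on GPU-accelerated simulators. The loop here only shows the principle: a policy is judged on its worst plausible conditions, not only on the nominal ones.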

Unlike digital AI, which often processes batches of historical data, physical AI must fuse multimodal streams (vision, LiDAR, tactile and force sensors) in real time within time windows of less than 100 ms, using algorithms optimised for edge computing.
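The Python sketch below shows one simple way to combine two of these streams under a time-window constraint. It keeps only the latest sample from each sensor and refuses to fuse if any sample is older than the window. Otherwise it merges the distance estimates with inverse-variance weighting, so the less noisy sensor counts for more. The sensor names, variances and the 100 ms window are assumptions for illustration. Real stacks typically rely on Kalman-type filters and hardware time synchronisation.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass
class Reading:
    sensor: str          # e.g. "camera" or "lidar" (hypothetical names)
    timestamp_ms: float  # acquisition time on a shared clock
    distance_m: float    # estimated distance to the nearest obstacle
    variance: float      # assumed measurement noise variance (m^2)


def fuse_latest(readings: Iterable[Reading], now_ms: float,
                window_ms: float = 100.0) -> Optional[float]:
    """Inverse-variance fusion of the latest reading from each sensor.

    Returns None when any sensor's latest sample is older than the window,
    so the caller can switch to a safe behaviour instead of acting on stale data.
    """
    latest: Dict[str, Reading] = {}
    for r in readings:
        if r.sensor not in latest or r.timestamp_ms > latest[r.sensor].timestamp_ms:
            latest[r.sensor] = r
    if not latest or any(now_ms - r.timestamp_ms > window_ms for r in latest.values()):
        return None
    weights = {name: 1.0 / r.variance for name, r in latest.items()}
    total = sum(weights.values())
    return sum(weights[name] * r.distance_m for name, r in latest.items()) / total


stream = [
    Reading("camera", 950.0, 2.00, 0.04),  # camera: noisier depth estimate
    Reading("lidar", 980.0, 1.90, 0.01),   # LiDAR: more precise
]
print(fuse_latest(stream, now_ms=1000.0))  # close to 1.92, weighted towards LiDAR
print(fuse_latest(stream, now_ms=1100.0))  # samples too old -> None
```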

Enabling Technical Components

The key technical components of Physical AI are:

- multimodal sensors (cameras, LiDAR – light detection and ranging, radar, tactile, inertial) for real-time environmental perception;

- edge AI and distributed computing (e.g. NVIDIA Jetson Orin) for low-latency local processing (< 50 ms);

- integrated mechatronics with actuators, motors and robotic arms for physical execution;

- agent-based models for reasoning, planning and continuous learning;

- digital twins and physics-accurate simulations (e.g. Omniverse) for safe and scalable training.

This stack transforms passive machines into adaptive autonomous systems that enhance human-machine interaction.

Industrial applications of physical AI are revolutionising manufacturing, with a focus on adaptive automation and operational resilience.

Assembly and flexible manufacturing

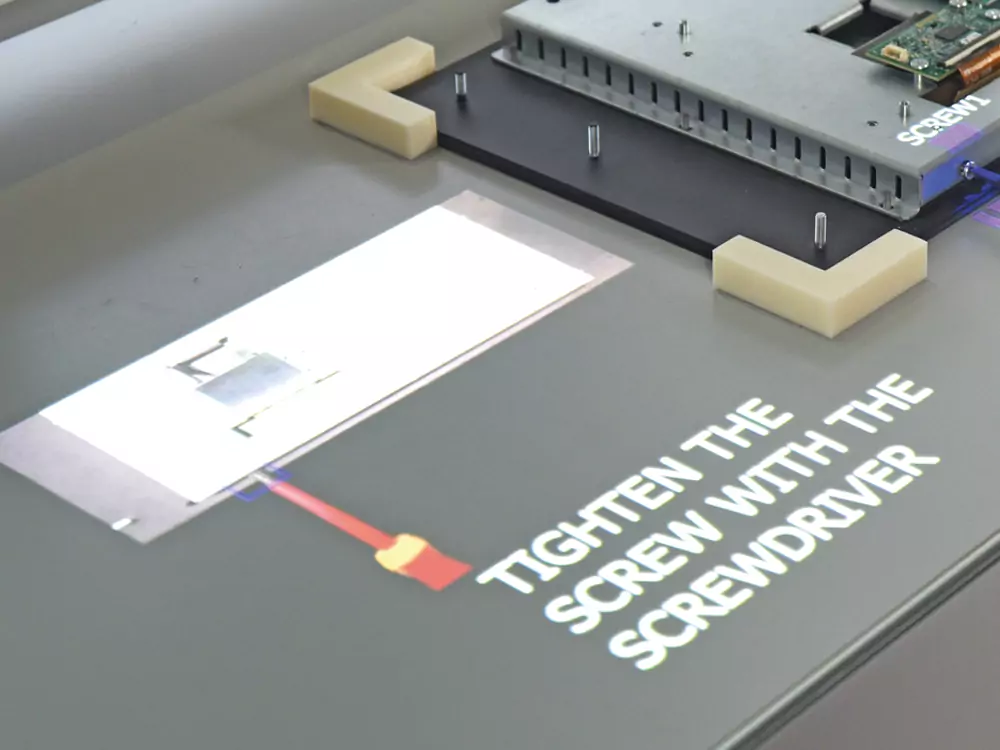

- Collaborative robots (cobots) and humanoid robots for high-mix/low-volume assembly without reprogramming: screwdriving, cable insertion, and handling of variable components. Foxconn has reduced cycle times by 20–30%, errors by 25% and OPEX by 15%.

- AI-driven quality inspection using computer vision: real-time defect detection, 40% higher yield compared to traditional automation.
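Industrial vision inspection today largely relies on trained neural networks. The underlying accept/reject logic, however, can be illustrated with a much simpler classical technique: comparing each captured image with a defect-free reference ("golden") image. In this Python sketch, images are small grids of grey levels. The pixel tolerance and the maximum number of deviating pixels are hypothetical parameters.

```python
from typing import List, Tuple

Image = List[List[int]]  # grey levels 0-255, one inner list per row


def inspect(image: Image, reference: Image, pixel_tol: int = 30,
            max_defect_pixels: int = 5) -> Tuple[bool, List[Tuple[int, int]]]:
    """Golden-template check: flag pixels that deviate from the reference by
    more than `pixel_tol` and reject the part if too many pixels are flagged."""
    if len(image) != len(reference) or any(len(a) != len(b) for a, b in zip(image, reference)):
        raise ValueError("image and reference must have the same shape")
    defects = [
        (r, c)
        for r, (row, ref_row) in enumerate(zip(image, reference))
        for c, (value, ref_value) in enumerate(zip(row, ref_row))
        if abs(value - ref_value) > pixel_tol
    ]
    return len(defects) <= max_defect_pixels, defects


reference = [[100] * 4 for _ in range(4)]
part = [row[:] for row in reference]
part[1][2] = 220  # simulated scratch
part[2][2] = 210
ok, defects = inspect(part, reference, max_defect_pixels=1)
print(ok, defects)  # prints False [(1, 2), (2, 2)]
```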

Logistics and Material Handling

- Adaptive AMRs and AGVs for dynamic palletising and collision-avoiding path planning: +10% travel efficiency (Amazon).

- Autonomous fleet management in warehouses: 25% increase in throughput, reduced downtime.
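Anti-collision path planning for AMRs is often built on graph search over an occupancy grid. The Python sketch below uses A* with a Manhattan-distance heuristic and replans when another vehicle occupies a cell. The grid, the 4-connected motion model and the `with_dynamic_obstacles` helper are hypothetical simplifications. They do not describe how any particular fleet manager, Amazon's included, is implemented.

```python
import heapq
from typing import Dict, Iterable, List, Optional, Tuple

Cell = Tuple[int, int]
Grid = List[str]  # '.' = free cell, '#' = blocked cell


def astar(grid: Grid, start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Shortest 4-connected path from start to goal, or None if unreachable."""
    rows, cols = len(grid), len(grid[0])

    def h(cell: Cell) -> int:
        # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    g_cost: Dict[Cell, int] = {start: 0}
    came_from: Dict[Cell, Cell] = {}
    while open_heap:
        _, g, current = heapq.heappop(open_heap)
        if current == goal:
            path = [current]
            while current in came_from:  # walk parents back to the start
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if g > g_cost[current]:
            continue  # outdated heap entry: a cheaper route was found later
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != '#':
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = current
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None


def with_dynamic_obstacles(grid: Grid, occupied: Iterable[Cell]) -> Grid:
    """Return a copy of the grid with cells occupied by other robots blocked."""
    rows = [list(row) for row in grid]
    for r, c in occupied:
        rows[r][c] = '#'
    return ["".join(row) for row in rows]


floor = ["....",
         ".##.",
         "...."]
print(astar(floor, (0, 0), (2, 3)))
# Another AMR now sits at (0, 3): replan around it.
print(astar(with_dynamic_obstacles(floor, [(0, 3)]), (0, 0), (2, 3)))
```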

Predictive Maintenance and Monitoring

- Digital twins and sensors for proactive maintenance of machinery and production lines: PepsiCo identifies 90% of pre-failure anomalies.

- Process control using physical AI: real-time optimisation in Siemens factories (Erlangen pilot project, 2026).
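A rolling z-score is a minimal way to flag pre-failure anomalies in a sensor stream such as spindle vibration. Each new sample is compared with the mean and standard deviation of recent normal behaviour. The Python sketch below is an illustrative stand-in, not the method used by PepsiCo or Siemens. The window size, the warm-up length and the threshold of 4 standard deviations are assumptions.

```python
import math
import statistics
from collections import deque


class RollingZScoreDetector:
    """Flag samples that deviate strongly from a rolling baseline."""

    def __init__(self, window: int = 50, threshold: float = 4.0, warmup: int = 10):
        self.history = deque(maxlen=window)  # recent samples judged normal
        self.threshold = threshold           # z-score above which we raise an alarm
        self.warmup = warmup                 # samples needed before judging

    def update(self, value: float) -> bool:
        """Add a sample; return True if it is anomalous."""
        if len(self.history) >= self.warmup:
            mean = statistics.mean(self.history)
            std = statistics.stdev(self.history)
            if std > 0 and abs(value - mean) / std > self.threshold:
                return True  # anomalies are not stored, so the baseline stays clean
        self.history.append(value)
        return False


detector = RollingZScoreDetector()
# Simulated vibration amplitude: small periodic variation around 1.0
normal = [1.0 + 0.01 * math.sin(i) for i in range(100)]
alarms = [detector.update(v) for v in normal]
print(any(alarms))           # prints False
print(detector.update(1.5))  # a sudden jump, prints True
```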

Collaborative Robotics and Safety

- Force-sensitive cobots for finishing, polishing and other tasks performed alongside human operators: closer human-machine integration and a 30% increase in safety.

- AI-driven factories: Siemens, Foxconn, Hyundai Heavy Industries, KION and PepsiCo are testing autonomous production lines.
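Force-sensitive cobots typically compare the contact force they measure with the force the task is expected to produce. When the difference grows, they slow down or perform a protective stop. The Python sketch below illustrates that logic with a linear speed scaling between a warning threshold and a stop threshold. The 20 N and 50 N limits are hypothetical. Real limits must come from a risk assessment and the applicable standards, such as ISO/TS 15066 for collaborative robots.

```python
from enum import Enum
from typing import Tuple


class SafetyAction(Enum):
    CONTINUE = "continue"
    REDUCE_SPEED = "reduce_speed"
    PROTECTIVE_STOP = "protective_stop"


def supervise_contact(measured_n: float, expected_n: float,
                      warn_n: float = 20.0, stop_n: float = 50.0) -> Tuple[SafetyAction, float]:
    """Return the safety action and a speed scale in [0, 1].

    The residual is the part of the measured force the task does not explain,
    e.g. an unexpected contact with an operator during polishing.
    """
    if not 0.0 < warn_n < stop_n:
        raise ValueError("thresholds must satisfy 0 < warn_n < stop_n")
    residual = abs(measured_n - expected_n)
    if residual >= stop_n:
        return SafetyAction.PROTECTIVE_STOP, 0.0
    if residual >= warn_n:
        # Scale speed linearly from 100% at warn_n down to 0% at stop_n
        return SafetyAction.REDUCE_SPEED, 1.0 - (residual - warn_n) / (stop_n - warn_n)
    return SafetyAction.CONTINUE, 1.0


# Polishing with a hypothetical expected pressing force of 10 N
for measured in (15.0, 45.0, 70.0):
    print(measured, supervise_contact(measured, expected_n=10.0))
```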

Note: The author is CTO of e-Novia

References

[1] World Economic Forum. (2025). Physical AI: Powering the New Age of Industrial Operations. https://reports.weforum.org/docs/WEF_Physical_AI_Powering_the_New_Age_of_Industrial_Operations_2025.pdf

[2] International Federation of Robotics. (2025). Global Robotics Survey. An estimated 609,000 robot installations are expected by 2026.

[3] Fujitsu Research Institute. (2026, January 16). The Rise of Physical AI: From Humanoid Robotics to Industrial Reality. https://global.fujitsu/-/media/Project/Fujitsu/Fujitsu-HQ/insight/tl-rise_of_physical_ai-20260116/The-Rise-of-Physical-AI—From-Humanoid-Robotics-to-Industrial-Reality-en.pdf

[4] NVIDIA. (2025, January). CES 2025 Keynote: The Next Wave of AI. Speech by Jensen Huang, CEO of NVIDIA, illustrating the evolution from Perception AI → Generative AI → Agentic AI → Physical AI.

[5] e-Novia. (2025). Physical AI: Why Italy Can Become a World Leader in Physical Artificial Intelligence. https://e-novia.it/news/italia-leader-mondiale-physical-ai

[6] Deloitte. (2025, December). Humanoid Robots and Beyond: Physical AI in Enterprise. https://www.deloitte.com/us/en/insights/topics/technology-management/tech-trends/2026/physical-ai-humanoid-robots.html

[7] Meta Intelligence. (2025, November 28). Tesla Optimus, Figure 02 & NVIDIA Isaac Status. Analysis of humanoid robots in commercial deployment. https://www.meta-intelligence.tech/en/insight-physical-ai

[8] Wevolver. (2026, March 9). The 2026 Edge AI Technology Report—Chapter 5: Physical AI & Embodied AI. https://www.wevolver.com/article/the-2026-edge-ai-technology-report/physical-ai-embodied-ai

[9] LinkedIn. (2026, January 15). Embodied AI & Robotics in 2026: Where Intelligence Meets Action. https://www.linkedin.com/pulse/embodied-ai-robotics-2026-where-intelligence-meets-action-te1kc

[20] DT Engineering. (2026, March 2). Collaborative Robots (Cobots) in Manufacturing: Safety & Efficiency. https://www.dtengineering.com/articles/collaborative-robots-cobots-transforming-manufacturing-safety-and-efficiency

[21] Deloitte. (2025, December 9). Onboard computing and processing: Neural Processing Units for edge AI. Discussion of NPU capabilities for low-latency inference. https://www.deloitte.com/us/en/insights/topics/technology-management/tech-trends/2026/physical-ai-humanoid-robots.html

[22] International Federation of Robotics / DT Engineering. (2026). Definition and characteristics of collaborative robots according to IFR standards. https://www.dtengineering.com/articles/collaborative-robots-cobots-transforming-manufacturing-safety-and-efficiency

[23] ForECR. (2026, February 16). Edge AI in Industrial Automation: Real-Time Control and Predictive Analytics. https://www.forecr.io/blogs/embedded-systems/edge-ai-in-industrial-automation-real-time-control-and-predictive-analytics

[24] Meta Intelligence. (2025, November 28). BMW case study with Figure 02, ITRI pilot scenarios. https://www.meta-intelligence.tech/en/insight-physical-ai

[25] DOBOT. (2025, December 18). Collaborative Robots (Cobots) in Manufacturing: The Future. CR5S with 3D vision for quality inspection and sorting. https://www.dobot-robots.com/insights/news/collaborative-robots-in-manufacturing.html

[26] ARM. (2025, September 22). Seven Edge AI Use Cases Powering Real Life—Smart Manufacturing section. https://newsroom.arm.com/blog/seven-edge-ai-use-cases-powering-real-life

[27] AuroNIQ Robotics. (2026, February 6). WEF-Davos 2026: Physical AI and Robotics as Central Future Trend. https://auroniq-robotics.com/en/wef-davos-2026-physical-ai-und-robotics-als-zentraler-zukunftstrend/

[28] NCBI / PMC. (2024, December 1). Integrating collaborative robots in manufacturing, logistics, and… Survey of cobot implementation in three sectors. https://pmc.ncbi.nlm.nih.gov/articles/PMC11646840/

[29] Automate.org. (2026, March 8). AI at the Edge: From Vision to Action. Industrial AI at the edge for machine vision, robotics control and AMR navigation. https://www.automate.org/videos/ai-at-the-edge-from-vision-to-action

[30] YouTube. (2025, December 31). These New AI Robots Are About to Become Real in 2026. https://www.youtube.com/watch?v=t-GeDuS3qWw

[31] ScienceDirect. (2020). Safety assurance mechanisms of collaborative robotic systems in manufacturing. https://www.sciencedirect.com/science/article/abs/pii/S0736584520302337

[32] EconomyMagazine. (2026, March 6). Robots and AI: the answer to young people leaving the manufacturing sector. https://www.economymagazine.it/robot-e-intelligenza-artificiale-nelle-fabbriche-italiane-la-risposta-alla-fuga-dei-giovani-dalla-manifattura

[33] ALAScom. (2025, December 3). Robotics in 2026: the 5 trends that will transform manufacturing. https://www.alascom.it/ultime-notizie/robotica-nel-2026-le-5-tendenze-che-cambieranno-il-modo-di-produrre-e-lavorare

[34] AzzurroDigitale. (2025, April 2). AI Agents and the Future of Manufacturing—Towards Smart and Sustainable Factories. Siemens Industrial Copilot case study: the impact of AI agents on productivity. https://www.azzurrodigitale.com/gli-ai-agents-e-il-futuro-della-manifattura-verso-fabbriche-intelligenti-e-sostenibili/

[35] TinnovaMag. (2025, October 21). Mechatronics: The 6 technologies for the factory of the future. https://tinnovamag.com/meccatronica-le-6-tecnologie-per-la-fabbrica-del-futuro/

[36] GlobalInsightServices. (2025, September 30). LIDAR in Industrial Automation Market Analysis and Forecast to 2034. Market size and trends for LIDAR technology. https://www.globalinsightservices.com/reports/lidar-in-industrial-automation-market/

[37] Sciendo. (2024). LIDAR and AI Based Surveillance of Industrial Processes. https://sciendo.com/2/v2/download/article/10.2478/ttj-2023-0002.pdf

[38] TIM Enterprise. (2025, March 24). 5G is boosting productivity in the manufacturing sector. The impact of 5G and AI on productivity and sustainability. https://www.gruppotim.it/it/archivio-stampa/mercato/2025/CS-TIM-Enterprise-BIREX-25-03-25.html

[39] PoliMi / Rocco. (n.d.). Automatic controls for mechatronics. Open access. https://rocco.faculty.polimi.it/cam/informatica_controllo.pdf

[40] Allerin. (2023, June 26). Computer Vision for Industrial Automation: Applications and Benefits. https://www.allerin.com/blog/computer-vision-for-industrial-automation-applications-and-benefits/

[41] Altair Engineering. (1999-ongoing). Solutions for Mechatronic Systems. https://altairengineering.it/mechatronics

[42] LinkedIn. (2025, July 27). Industrial Vision Sensors Market Metrics | Analysis by Type. https://www.linkedin.com/pulse/industrial-vision-sensors-market-metrics-analysis-dq1ce

[43] TecnoForIndustry. (2022, December 6). Mechatronics: What it is, what it is used for, and its areas of application. https://tecno4industry.it/meccatronica/

[44] Raise Summit. (2026, March 12). 20 Physical AI Companies to Watch in 2026. https://www.raisesummit.com/de/post/20-physical-ai-companies-to-watch-in-2026

[45] Scalvini, L., Adrodegari, F., & Saccani, N. (2026, February). Everything-as-a-Service in manufacturing: a framework for XaaS adoption. Article published in Production & Manufacturing Research, University of Brescia (ASAP Center), funded by MICS/PNRR/NextGenerationEU. https://www.innovationpost.it/modelli-business/everything-as-a-service-xaas-nel-manifatturiero-come-trasformare-la-vendita-di-prodotti-in-servizi/
